Conditional Generative Accumulation of Photons

Research project on building a novel conditional generative model. MSc Thesis, 2024.

Abstract

Inverse image problems like inpainting, colorization, and super-resolution are ill-posed challenges in computer vision. This project introduces the Conditional Generative Accumulation of Photons (Conditional GAP) model, which integrates conditional inputs to guide image reconstruction under Poisson noise constraints. The model demonstrates robust performance on standard benchmarks, offering diverse solutions while addressing noise challenges. This work establishes a foundation for Poisson-based generative models in complex inverse problems.

Research Problem Statement

The research evaluates the Conditional GAP model’s capability to solve three inverse problems (inpainting, colorization, and super-resolution) through the following objectives:

- Adapting the model to handle natural inverse problems under Poisson noise assumptions.

- Assessing the impact of conditional inputs on guidance and constraints.

- Quantifying performance through metrics and qualitative analysis.

- Testing diversity denoising for incomplete/damaged data.

Methodology

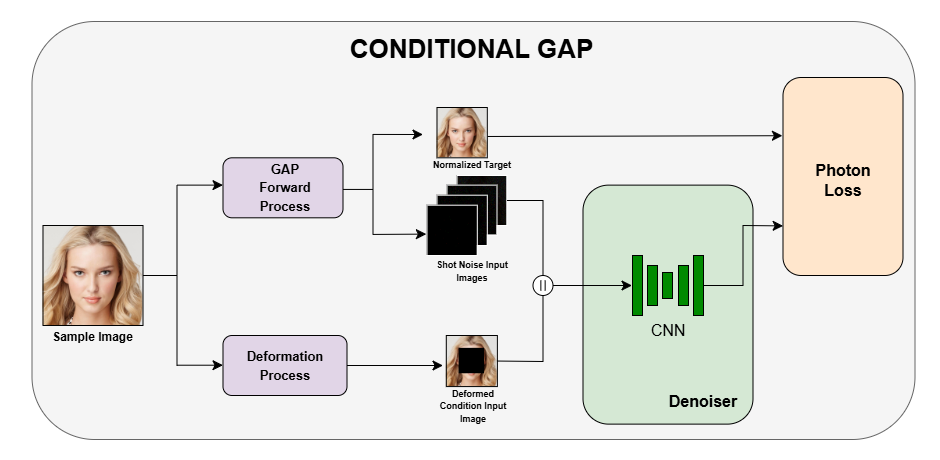

- Framework: Unified conditional framework for inpainting, colorization, and super-resolution.

- Forward Process: Training pairs generated via photon sampling (noisy input → normalized clean target).

- Backward Process: Task-specific deformation matrices create conditional inputs to guide reconstruction.
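The forward and deformation steps above can be sketched in a few lines of NumPy. This is a minimal illustration, not the thesis implementation: the function names `make_training_pair` and `deform`, the mapping from a pseudo-PSNR value p to a mean photon count of 10^(p/10) per pixel, and the three concrete deformations (center mask, channel averaging, 4x average pooling) are all assumptions made for the example.

```python
import numpy as np

def make_training_pair(clean, pseudo_psnr, rng):
    """Return (noisy photon-count input, normalized clean target)."""
    clean = np.asarray(clean, dtype=np.float64)
    # Assumed mapping: pseudo-PSNR p -> mean photons per pixel of 10**(p/10).
    mean_photons = 10.0 ** (pseudo_psnr / 10.0)
    rate = clean / (clean.mean() + 1e-12) * mean_photons
    # Photon sampling: each pixel receives a Poisson-distributed photon count.
    noisy = rng.poisson(rate).astype(np.float64)
    # Normalized target: entries sum to 1, like a distribution over pixels.
    target = clean / (clean.sum() + 1e-12)
    return noisy, target

def deform(clean, task, factor=4):
    """Build a conditional input y_c from a clean (H, W, C) image (hypothetical)."""
    clean = np.asarray(clean, dtype=np.float64)
    h, w, c = clean.shape
    if task == "inpainting":
        out = clean.copy()
        out[h // 4: 3 * h // 4, w // 4: 3 * w // 4] = 0.0  # mask the center
        return out
    if task == "colorization":
        gray = clean.mean(axis=2, keepdims=True)  # drop color information
        return np.repeat(gray, c, axis=2)
    if task == "super_resolution":
        # Average-pool by `factor`, then upsample back with nearest neighbor.
        low = clean.reshape(h // factor, factor, w // factor, factor, c).mean(axis=(1, 3))
        return np.repeat(np.repeat(low, factor, axis=0), factor, axis=1)
    raise ValueError(f"unknown task: {task}")

rng = np.random.default_rng(0)
clean = rng.random((8, 8, 3))           # stand-in for a face image
noisy, target = make_training_pair(clean, pseudo_psnr=-10.0, rng=rng)
y_c = deform(clean, "inpainting")
```

Keeping the conditional input at the same spatial size as the noisy input makes it easy to feed both to one network, for example by concatenating them along the channel axis, though the thesis may combine them differently.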

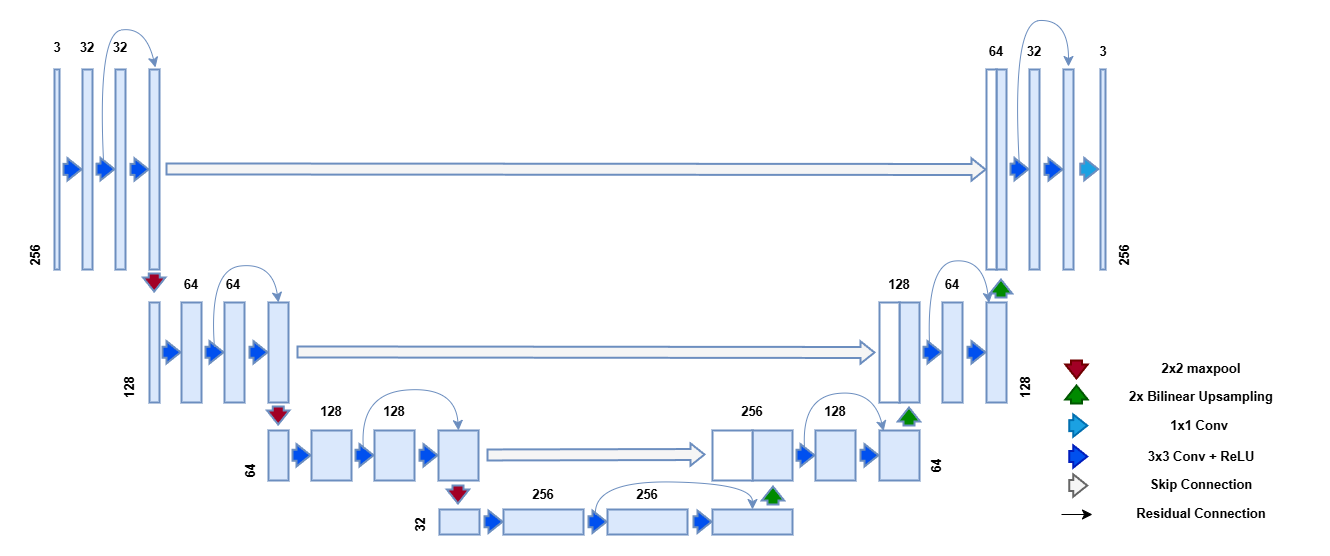

- Architecture: Modified 7-level UNet with residual blocks, bilinear upsampling, and Fourier feature mapping (10 sinusoids).

- Formulation: The Conditional GAP model is defined as: \begin{equation} f(y_t, y_c;\theta) \approx p(i = i_{t+1}| y_t, y_c) \end{equation} where \(y_c\) is the additional conditional input created by the deformation process and \(f\) is the CNN parametrized by \(\theta\).

- Photon Loss: Extended cross-entropy loss incorporating conditional input \(y_c\):

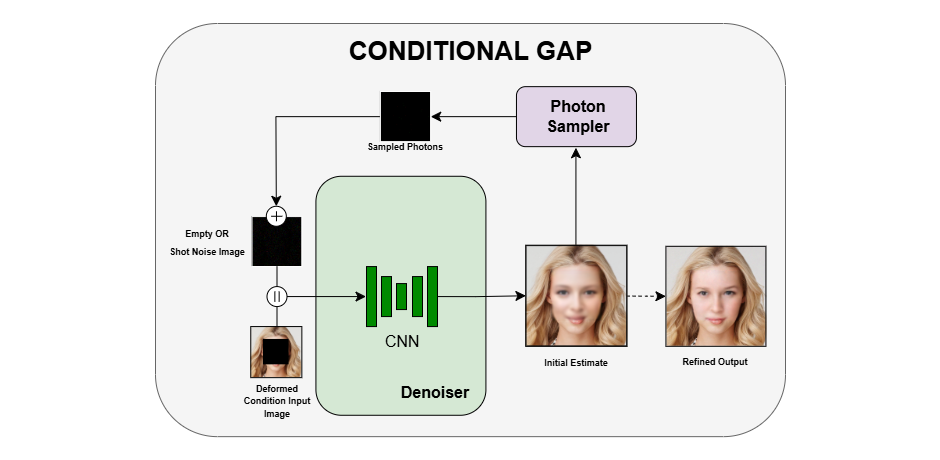

\begin{equation} L(\theta) = \sum_{k = 1}^m \frac{1}{n|y_{tar}^k|} \sum_{i = 0}^n \ln f_i(y_{inp}^k, y_{cinp}^k;\theta) \, y_{tar,i}^k \end{equation}

Here the Photon Loss for the Conditional GAP model takes the additional conditional input \(y_{cinp}\) alongside \(y_{inp}\), with \(y_{tar}\) as the normalized target.

- Conditional Generation: Iterative photon sampling from noisy/empty inputs to produce diverse solutions.

- Cascaded Training: Five specialized models trained on pseudo-PSNR ranges (e.g., [-40, -30] to [0, 10]) for faster convergence.
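As a rough sketch of the Photon Loss and of conditional generation by iterative photon sampling, the NumPy code below evaluates the loss for a batch of per-pixel logits and then accumulates photons starting from an empty image. It assumes that \(f\) is a softmax over pixels, that \(|y_{tar}^k|\) is the sum of the target's entries, and that the sum is negated so the loss can be minimized. The names `photon_loss`, `generate`, `predict`, and `photons_per_step`, as well as the toy predictor, are hypothetical stand-ins for the trained network and its sampling schedule.

```python
import numpy as np

def log_softmax(logits):
    """Numerically stable log-softmax over all pixels of each image."""
    flat = logits.reshape(logits.shape[0], -1)
    shifted = flat - flat.max(axis=1, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))

def photon_loss(logits, targets):
    """Cross-entropy between predicted photon distribution and target.

    logits, targets: arrays of shape (batch, ...) with matching shapes.
    """
    log_f = log_softmax(logits)                      # ln f_i per pixel
    tar = targets.reshape(targets.shape[0], -1)      # y_tar,i
    n = tar.shape[1]                                 # pixels per image
    norm = n * tar.sum(axis=1)                       # n * |y_tar| (assumed)
    per_image = (log_f * tar).sum(axis=1) / norm
    return -per_image.sum()                          # negated: lower is better

def generate(predict, y_c, total_photons, rng, photons_per_step=1):
    """Accumulate photons one batch at a time, guided by the condition y_c."""
    y_t = np.zeros(y_c.shape, dtype=np.int64)        # start from an empty image
    while y_t.sum() < total_photons:
        probs = predict(y_t, y_c).ravel()
        probs = probs / probs.sum()                  # distribution over pixels
        k = min(photons_per_step, total_photons - int(y_t.sum()))
        y_t += rng.multinomial(k, probs).reshape(y_t.shape)
    return y_t

rng = np.random.default_rng(0)
y_c = rng.random((4, 4))
# Toy predictor: ignores y_t and simply follows the condition.
toy_predict = lambda y_t, y_c: y_c + 1e-3
sample = generate(toy_predict, y_c, total_photons=500, rng=rng, photons_per_step=50)
```

Because every step draws photons at random, repeated calls with different seeds yield different images for the same condition, which is the source of the diverse solutions mentioned above. In a cascaded setup, `predict` could dispatch to the model trained for the current pseudo-PSNR range.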

Implementation Details

- Datasets: FFHQ (256x256 faces) for training; CelebA-HQ for evaluation.

- Hardware Specifications: The model was trained on an NVIDIA Tesla T4 GPU.

- Software Specifications: The project is written in Python and uses PyTorch Lightning for training and Torchvision for image processing.

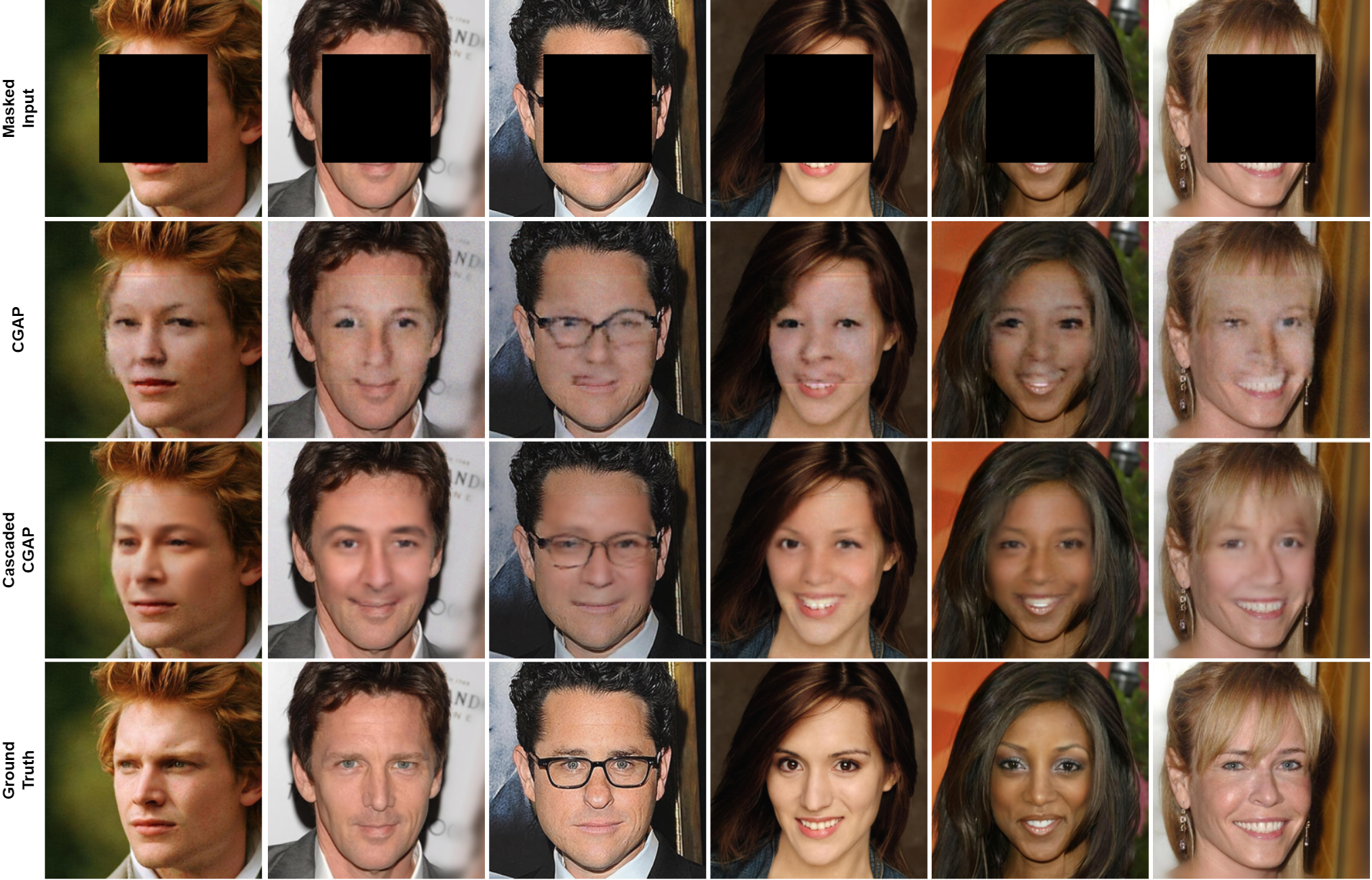

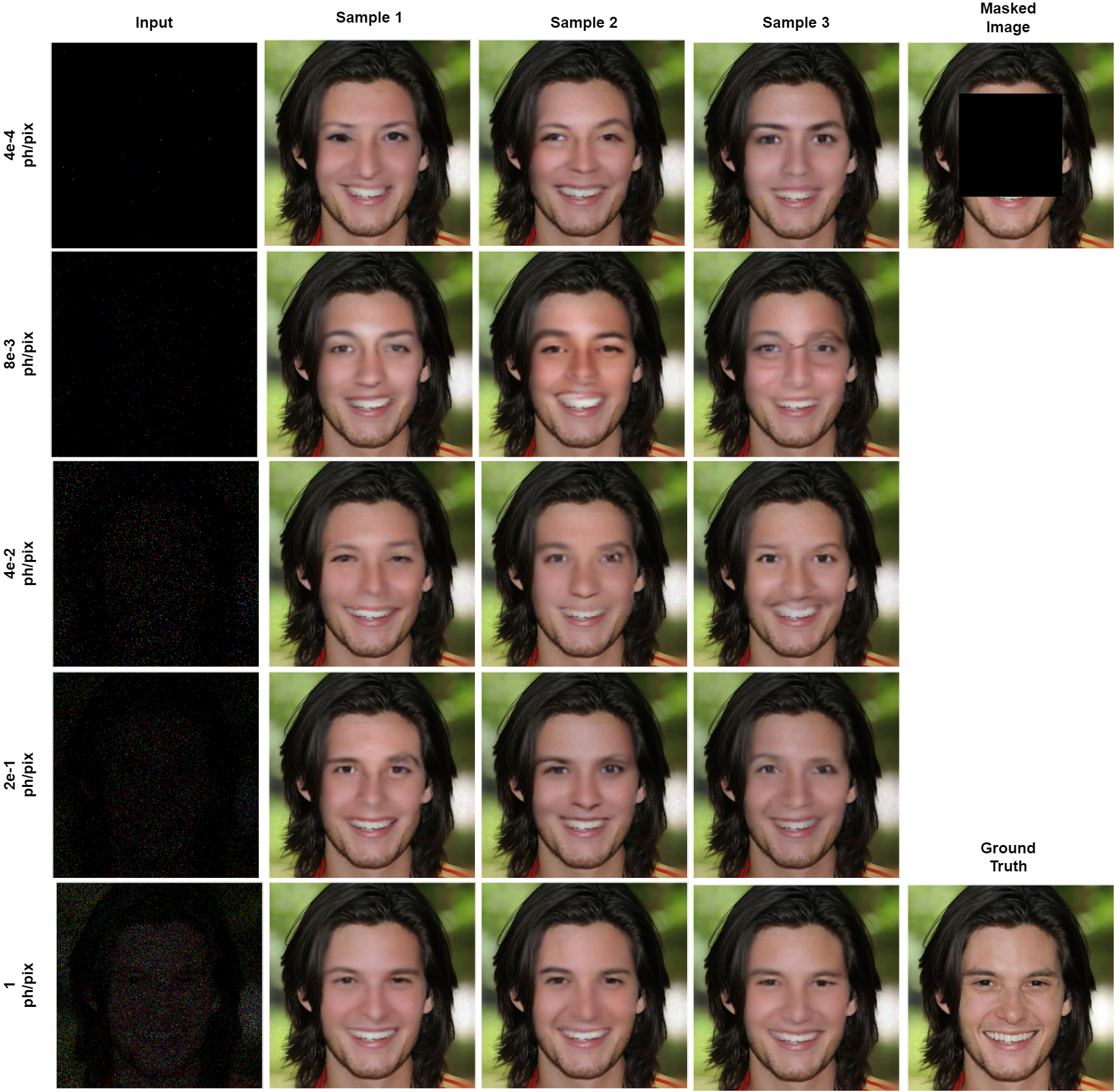

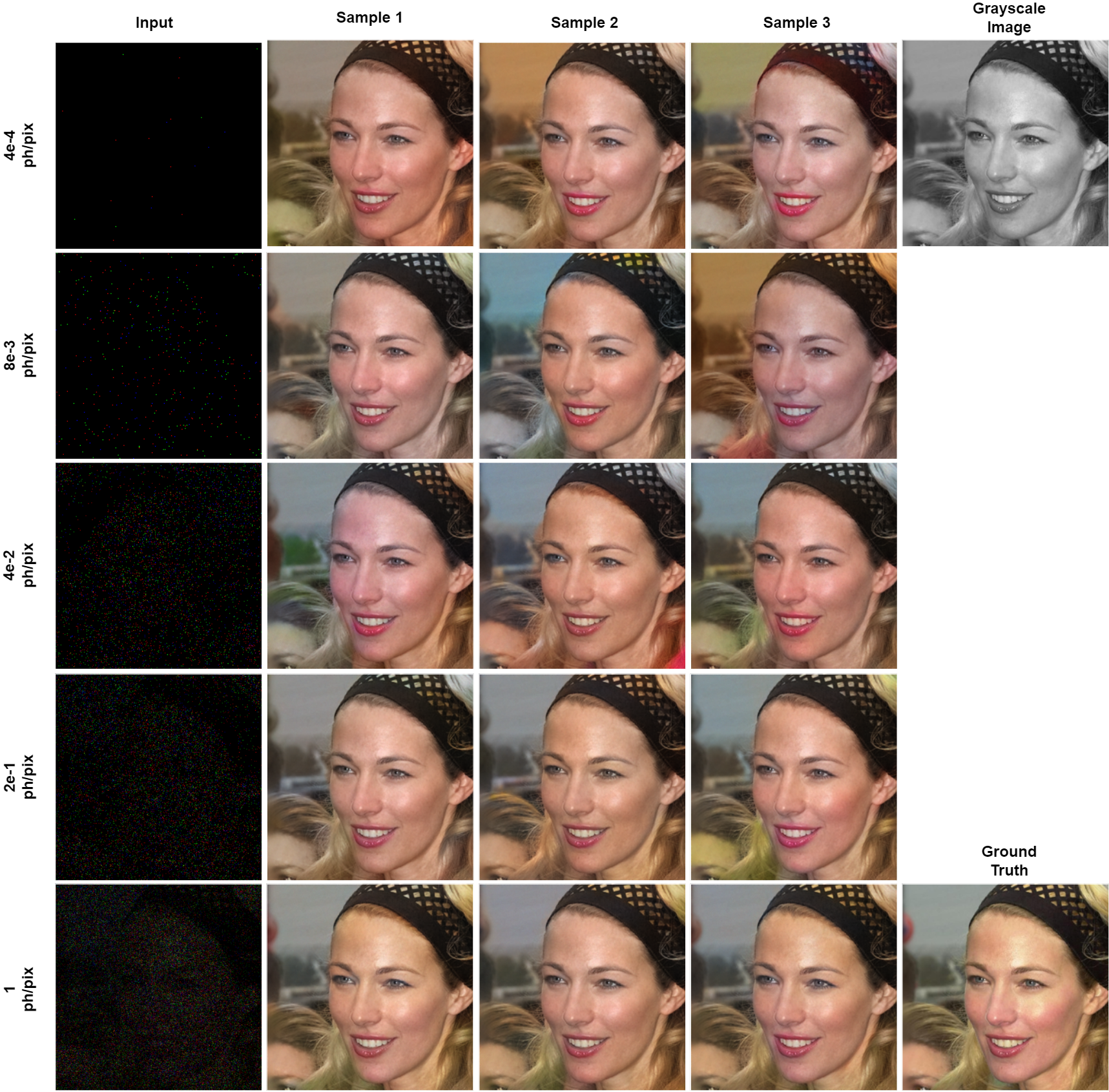

Qualitative Results

Diversity Denoising

Technology Stack

- Python

- PyTorch

- PyTorch Lightning

- TensorBoard

Thesis Report

Want to learn how drastically noisy images get denoised? Check out my Thesis Report.
