Ghost Writer

Multi-Persona AI Workflow for CV and Cover Letter Personalization

Overview

Crafting a tailored resume for a specific job application can be challenging. While AI offers potential, generic templates and zero-shot prompting often lack the context-awareness needed to truly stand out. This limitation reflects a broader trend in AI: moving beyond simple zero-shot requests towards AI workflows and agents that use tools in a constrained environment to reason iteratively and tackle complex, multi-step tasks more effectively.

One approach in this direction involves structured “research” before generation. The STORM framework, an open-source writing system designed to generate comprehensive Wikipedia-like articles by simulating multi-perspective research dialogues, exemplifies this. Inspired by STORM’s methodology for building deep understanding, I explored its adaptation for a highly personalized use case: resume writing.

In this post, I’ll detail how I adapted the core principles of STORM to analyse personal documents (like your resume and a target job description). I chose resume writing because it’s a tangible problem where AI-driven, context-aware guidance offers significant advantages over generic advice.

The result is Ghost Writer, an open-source Resume Advisor built on these principles. This post dives into the implementation details, explaining the modifications made to STORM and the architecture of the Ghost Writer system, and offers insights for anyone building advanced, context-aware AI applications.

Primary Inspiration

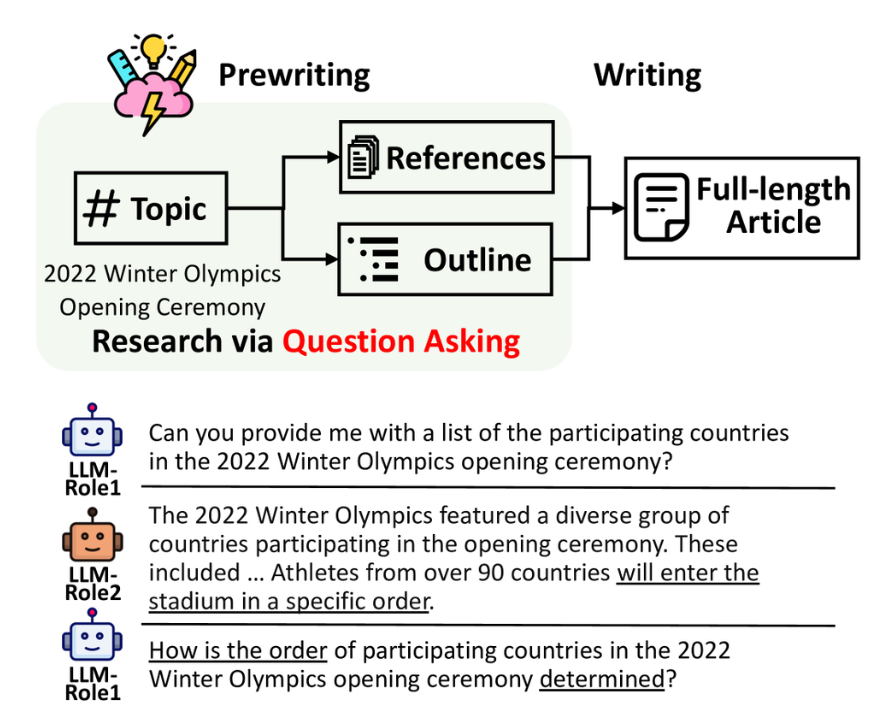

STORM (Synthesis of Topic Outlines through Retrieval and Multi-perspective Question Asking) is an AI system designed to generate comprehensive, Wikipedia-like articles. Its main focus is the pre-writing, or research, stage.

Instead of directly generating text, STORM orchestrates a simulated conversation. It creates multiple AI ‘personas’, each representing a different viewpoint or area of expertise related to a topic. These personas then engage in a dialogue with an ‘expert’ agent, asking questions to explore the topic thoroughly. This multi-perspective question-asking allows the system to gather diverse information, identify key sub-points, and build a deeper understanding before generating the final article, as shown below:

This forms the basis for Ghost Writer, which adapts the research simulation approach to a different, highly personalized task: tailoring a CV to a specific job description. Ghost Writer employs specialized ‘resume advisor’ personas (e.g., a technical expert, an HR perspective, etc.) to analyse the user’s input (resume, job description) and simulate a discussion that identifies key areas for improvement. It aims to automate the strategic thinking required for effective resume customization, bridging the gap between zero-shot LLM prompts and expensive personalized resume writing services.

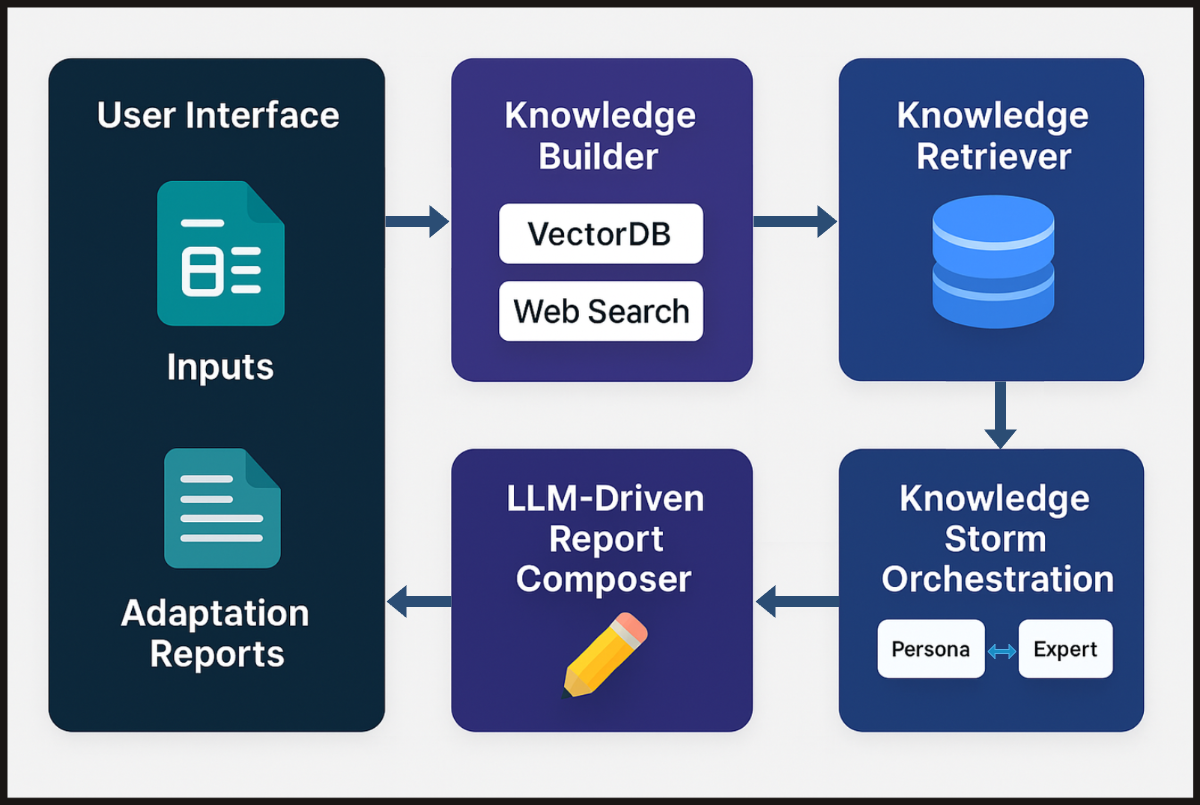

To achieve this, Ghost Writer integrates STORM’s conversational framework with Retrieval-Augmented Generation (RAG) and targeted web search for company and role context, and presents the resulting advice through a user interface.

Methodology

- Create Portfolio: KnowledgeBaseBuilder Module

In the first step, this module processes the input documents and external sources to create structured profiles for both the “user” and the target “company”.

- Company Profile: Here we aim to go beyond just the job description, using targeted web searches to gather information on company values, recent news, and specific role requirements. This creates a richer understanding of the target context.

- User Profile: Information is extracted from the user’s provided documents (e.g., existing resume) to build a detailed portfolio of skills, experiences, and projects.

This phase populates the system’s knowledge base, which later steps query through RAG.
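The profile-building step above can be illustrated with a minimal sketch. It assumes a toy bag-of-words "embedding" and an in-memory store in place of a real embedding model and vector database; `KnowledgeBase`, `embed`, and the sample texts are hypothetical names and data, not taken from the project.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass, field


def embed(text: str) -> Counter:
    """Toy bag-of-words 'embedding'; a real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9+#]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


@dataclass
class KnowledgeBase:
    """In-memory stand-in for the vector store (hypothetical class)."""
    chunks: list = field(default_factory=list)  # entries are (profile, text, vector)

    def add(self, profile: str, text: str) -> None:
        self.chunks.append((profile, text, embed(text)))

    def search(self, query: str, top_k: int = 2) -> list:
        q = embed(query)
        ranked = sorted(self.chunks, key=lambda c: cosine(q, c[2]), reverse=True)
        return [(profile, text) for profile, text, _ in ranked[:top_k]]


# Made-up sample data: two user-profile chunks and one company-profile chunk.
kb = KnowledgeBase()
kb.add("user", "Built a Python data pipeline processing sensor logs")
kb.add("user", "Led a team of three engineers on a mobile app")
kb.add("company", "The company values open-source contributions and Python expertise")

results = kb.search("python experience", top_k=2)
```

Tagging each chunk with its profile ("user" or "company") lets a later retrieval step tell apart evidence about the candidate from context about the employer.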

- STORM Orchestration: Storm Module

This core phase adapts the STORM methodology to simulate a multi-persona review. The module orchestrates a simulation in which the personas and the Expert converse in a loop:

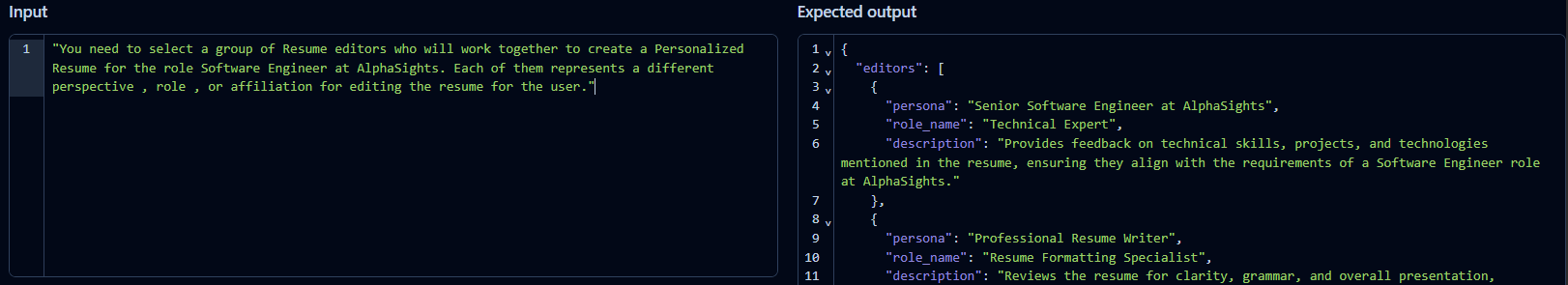

- Persona Generation: An LLM generates diverse ‘resume advisor’ personas relevant to the specific role and company (e.g., a hiring manager, an HR representative, etc.). Each persona brings a unique viewpoint. See the image below for an example trace showing the prompt and resulting persona definitions.

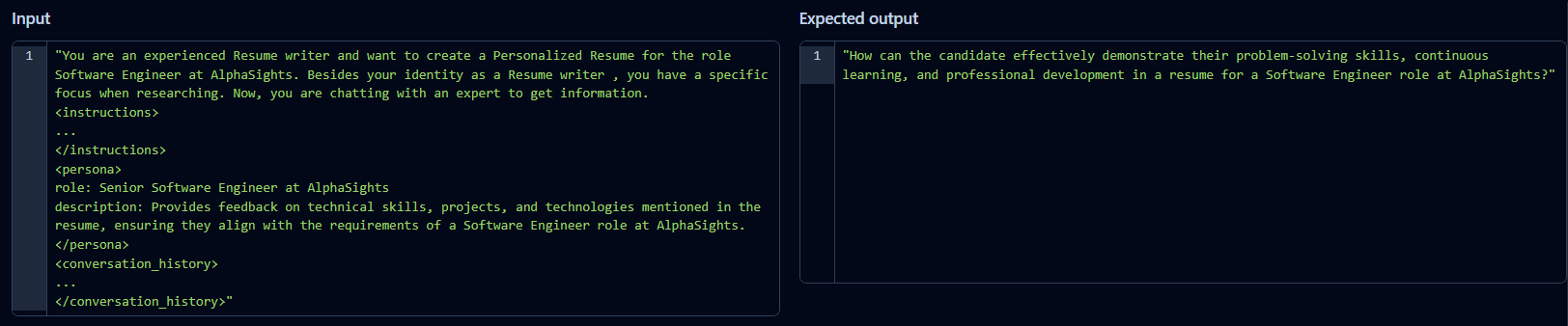

- Question Generation: Each persona, guided by its specific role and the conversation history, asks targeted questions to the Expert agent. These questions aim to get information needed to provide relevant advice for tailoring the user’s resume. the image below shows an example of a persona formulating a question.

-

Retrieval & Answer Generation:

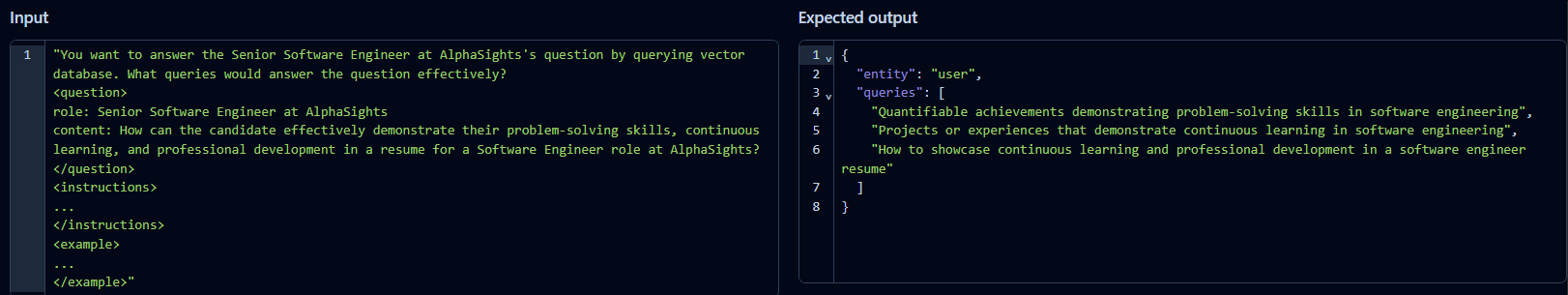

Query Formulation: The persona’s natural language question is broken down by an LLM into specific, structured queries suitable for retrieving information from the knowledge base built in the first phase. The image below shows the question-to-query decomposition.

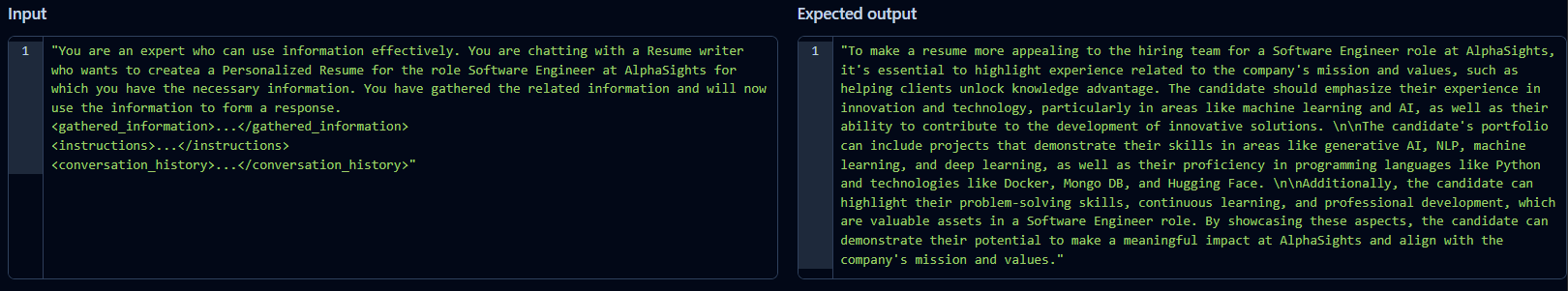

Contextual Answer Synthesis: The generated queries are used to retrieve relevant sources from the knowledge base. The retrieved information is then provided as context to the Expert, who synthesizes a relevant answer to the persona’s question, considering the conversation history. The image below shows the Expert’s resulting answer.
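The persona-generation and query-formulation steps above both rely on structured LLM output. A minimal sketch of how such replies might be requested and validated follows, assuming the model is asked to reply in JSON; the `Persona` fields, the prompt wording, and the canned replies are illustrative assumptions rather than the project's actual schema.

```python
import json
from dataclasses import dataclass


@dataclass
class Persona:
    name: str
    role: str
    focus: str


def build_persona_prompt(role: str, company: str, n: int = 3) -> str:
    """Assemble a prompt asking for personas as JSON (wording is an assumption)."""
    return (
        f"Generate {n} resume advisor personas for the {role} role at {company}. "
        'Reply with a JSON list of objects with keys "name", "role", "focus".'
    )


def parse_personas(raw: str) -> list[Persona]:
    """Validate the model's JSON reply; skip entries missing a required key."""
    personas = []
    for item in json.loads(raw):
        if all(key in item for key in ("name", "role", "focus")):
            personas.append(Persona(item["name"], item["role"], item["focus"]))
    return personas


def parse_queries(raw: str, max_queries: int = 3) -> list[str]:
    """Parse a JSON list of search queries, dropping blanks and duplicates."""
    seen, queries = set(), []
    for q in json.loads(raw):
        q = q.strip()
        if q and q.lower() not in seen:
            seen.add(q.lower())
            queries.append(q)
    return queries[:max_queries]


# Canned replies standing in for real LLM output.
fake_persona_reply = json.dumps([
    {"name": "Alex", "role": "Hiring manager", "focus": "technical depth"},
    {"name": "Sam", "role": "HR representative", "focus": "culture fit"},
    {"name": "incomplete"},
])
fake_query_reply = json.dumps(["python projects", "Python projects", " ", "team leadership"])

prompt = build_persona_prompt("Data Engineer", "ExampleCorp")
personas = parse_personas(fake_persona_reply)
queries = parse_queries(fake_query_reply)
```

Validating replies before they enter the loop keeps a malformed persona or an empty query from propagating into retrieval.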

This conversational loop typically repeats for a set number of iterations or until personas indicate they have sufficient information. The core logic is captured in the pseudo-code below:

    # Pseudo-code: get_* and query_knowledge_base stand in for LLM and retrieval calls

    def storm(input_documents, job_description):
        # input_documents are assumed to be indexed in the knowledge base already
        conversations = []
        # Hypothetical helper: pull the role and company out of the job description
        role, company = extract_role_and_company(job_description)
        topic = f"Resume Writing for the {role} role at {company}"

        # Generate personas
        personas = get_personas(topic)

        def conversation_simulation(persona):
            conv_history = []
            max_iterations = 10
            for _ in range(max_iterations):
                # Persona asks a question
                question = get_questions(topic, persona, conv_history)
                conv_history.append(question)

                # Generate search queries
                queries = get_search_queries(question)

                # Retrieve sources
                sources = query_knowledge_base(queries)

                # Expert generates answer
                answer = get_answers(topic, persona, conv_history, sources)
                conv_history.append(answer)
            return conv_history

        for persona in personas:
            result = conversation_simulation(persona)
            conversations.append({"persona": persona, "conversation": result})

        return conversations
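The pseudo-code above always runs the full number of iterations, while the text also mentions stopping once a persona has enough information. A runnable sketch of that early exit is below, with scripted stubs in place of the LLM-backed persona and expert; the `STOP_SIGNAL` sentinel and the turn dictionaries are assumptions made for illustration.

```python
from typing import Callable

STOP_SIGNAL = "NO_MORE_QUESTIONS"  # hypothetical sentinel, not taken from the project


def simulate_conversation(
    ask: Callable[[list], str],
    answer: Callable[[str, list], str],
    max_iterations: int = 10,
) -> list:
    """Alternate persona questions and expert answers until a stop condition."""
    history = []
    for _ in range(max_iterations):
        question = ask(history)
        if STOP_SIGNAL in question:
            break  # the persona reports it has enough information
        history.append({"speaker": "persona", "text": question})
        history.append({"speaker": "expert", "text": answer(question, history)})
    return history


# Stubs standing in for the LLM-backed persona and expert.
scripted_questions = iter([
    "Which projects show Python experience?",
    "Does the resume mention team leadership?",
    STOP_SIGNAL,
])


def stub_ask(history: list) -> str:
    return next(scripted_questions)


def stub_answer(question: str, history: list) -> str:
    return f"Answer to: {question}"


conversation = simulate_conversation(stub_ask, stub_answer)
```

Here the loop ends after two question-answer pairs because the third scripted "question" is the sentinel; without it, `max_iterations` acts as the upper bound.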

- Report Writing: WriterEngine.post_workflow Module

Finally, we analyse the complete conversation histories generated by each persona interaction. The module derives key insights, identifies actionable feedback, and synthesizes this information from the multiple personas into a single, structured, and meaningful draft report for the user, providing concrete suggestions for resume improvement.
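One way to picture this synthesis step is as a single prompt assembled from all persona conversations. The sketch below assumes each conversation is a dict with `persona` and `conversation` keys, as in the pseudo-code, and that each turn records a speaker and text; the prompt wording and function names are hypothetical, and the actual LLM call is left out.

```python
def format_transcript(conversations: list) -> str:
    """Flatten per-persona conversations into one labelled transcript."""
    lines = []
    for conv in conversations:
        lines.append(f"## Persona: {conv['persona']}")
        for turn in conv["conversation"]:
            lines.append(f"{turn['speaker']}: {turn['text']}")
    return "\n".join(lines)


def build_report_prompt(conversations: list, sections: list) -> str:
    """Ask for suggestions grouped under fixed report sections."""
    section_list = "\n".join(f"- {s}" for s in sections)
    return (
        "Using the advisor conversations below, write resume suggestions "
        "under each of these sections:\n"
        f"{section_list}\n\n"
        f"{format_transcript(conversations)}"
    )


# Made-up example input for two personas.
example_conversations = [
    {
        "persona": "Hiring manager",
        "conversation": [
            {"speaker": "persona", "text": "Which projects show Python experience?"},
            {"speaker": "expert", "text": "The sensor pipeline project."},
        ],
    },
    {
        "persona": "HR representative",
        "conversation": [
            {"speaker": "persona", "text": "Is leadership mentioned?"},
            {"speaker": "expert", "text": "Yes, a team of three."},
        ],
    },
]

report_prompt = build_report_prompt(
    example_conversations,
    ["Targeted Keywords & Skills", "Formatting & Clarity"],
)
```

Fixing the section names in the prompt is one simple way to keep the report structure stable across runs, whatever the personas happened to discuss.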

Implementation

Ghost Writer was developed with the following technologies, models, and services, chosen to support its workflow while keeping it runnable locally:

- Operating System:

  - Ubuntu

- Programming Language:

  - Python

- Large Language Models (LLMs):

  - Primary Text Generation: Llama 3 70B - Selected for its strong instruction-following and coherent text generation capabilities, used for generating conversational agent responses.

  - Structured Data Generation: Gemini 2.0 Flash - Used for tasks requiring reliable, structured outputs, such as generating persona definitions or extracting search queries. This division of labour leverages the strengths of different models while keeping costs minimal.

- Web Search Integration:

  - Google Custom Search JSON API - Used to dynamically fetch relevant information about the company during the KnowledgeBaseBuilder phase, enriching the context beyond user-provided documents.

- Retrieval Augmented Generation (RAG) Component:

  - Vector Store: Qdrant - Chosen for its efficiency and scalability as the vector database to store embeddings of the generated profiles and web search content, enabling fast retrieval of relevant context for the “Expert” agent.

- Observability and Tracing:

  - Langfuse - Integrated to provide detailed tracing and debugging of the LLM calls and interactions throughout the workflow.
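The division of labour between the two models can be pictured as a small routing table. This is only a sketch of the idea: the `Task` categories, the `ModelChoice` fields, and the model labels are placeholders chosen for illustration, not real API identifiers or the project's configuration.

```python
from dataclasses import dataclass
from enum import Enum


class Task(Enum):
    CONVERSATION = "conversation"  # persona questions, expert answers
    STRUCTURED = "structured"      # persona definitions, search queries


@dataclass(frozen=True)
class ModelChoice:
    label: str          # placeholder label, not an API model identifier
    expects_json: bool  # whether the reply should be parsed as structured data


ROUTES = {
    Task.CONVERSATION: ModelChoice(label="llama-3-70b", expects_json=False),
    Task.STRUCTURED: ModelChoice(label="gemini-2.0-flash", expects_json=True),
}


def pick_model(task: Task) -> ModelChoice:
    """Look up which model handles a given kind of task."""
    return ROUTES[task]


choice = pick_model(Task.STRUCTURED)
```

Keeping the mapping in one place makes it easy to swap a model for one task type without touching the conversation loop.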

Result

The Ghost Writer system successfully processes user inputs and generates detailed, structured reports aimed at guiding resume and cover letter optimization.

System Output:

- Generated Reports: The core output is a multi-section report providing suggestions across several key areas. As shown in the video demonstration, typical sections include:

  - Targeted Keywords & Skills

  - Company Values & Role Alignment

  - Experience Section - Impact & Quantify

  - Summary/Profile Enhancement

  - Formatting & Clarity

- Contextual Adaptation: The content of the report adapts to the specifics of the inputs and the research phase, aiming to provide relevant, contextual suggestions.

Video Demonstration:

The following video demonstrates the Ghost Writer workflow: uploading documents, system processing, and the generated report interface with specific suggestions for the resume and cover letter.

Future Work

While the system demonstrates the capability to generate these context-aware reports, a formal evaluation of the advice quality and its impact on resume effectiveness is currently underway and will be detailed in future work.

Conclusion

Ghost Writer represents a practical exploration into applying advanced AI workflow concepts, specifically adapting the research-oriented STORM framework, to the highly personalized challenge of resume and cover letter tailoring. By simulating a multi-persona analysis grounded in user documents and real-time web data, it moves beyond generic advice to offer structured, context-aware guidance aimed at empowering job seekers.

The journey detailed in this post, from identifying the limitations of simpler AI approaches to implementing an AI workflow involving persona generation, iterative question-asking, RAG, and structured report synthesis, highlights both the potential and the complexities of building sophisticated AI assistants. While the quality of the generated advice is yet to be evaluated rigorously, the current system demonstrates a functional pipeline for this complex task. Evaluation and its planning deserve their own post!

I hope it serves as a useful tool or example for developers and researchers interested in AI workflows and in applying these techniques to real-world problems.

Thank you for reading!